Developers get good press. Widely lauded as kingmakers and heroes of the new digital revolution, software developers are in short supply and high demand, and are globally recognised as key to the new fabric of computing we are building across the web and the cloud.

But throughout their recent reign in tech, things have broadened; the operations function that works to underpin, manage and facilitate developer needs has been championed and brought more closely into line with software programmers’ workflow processes.

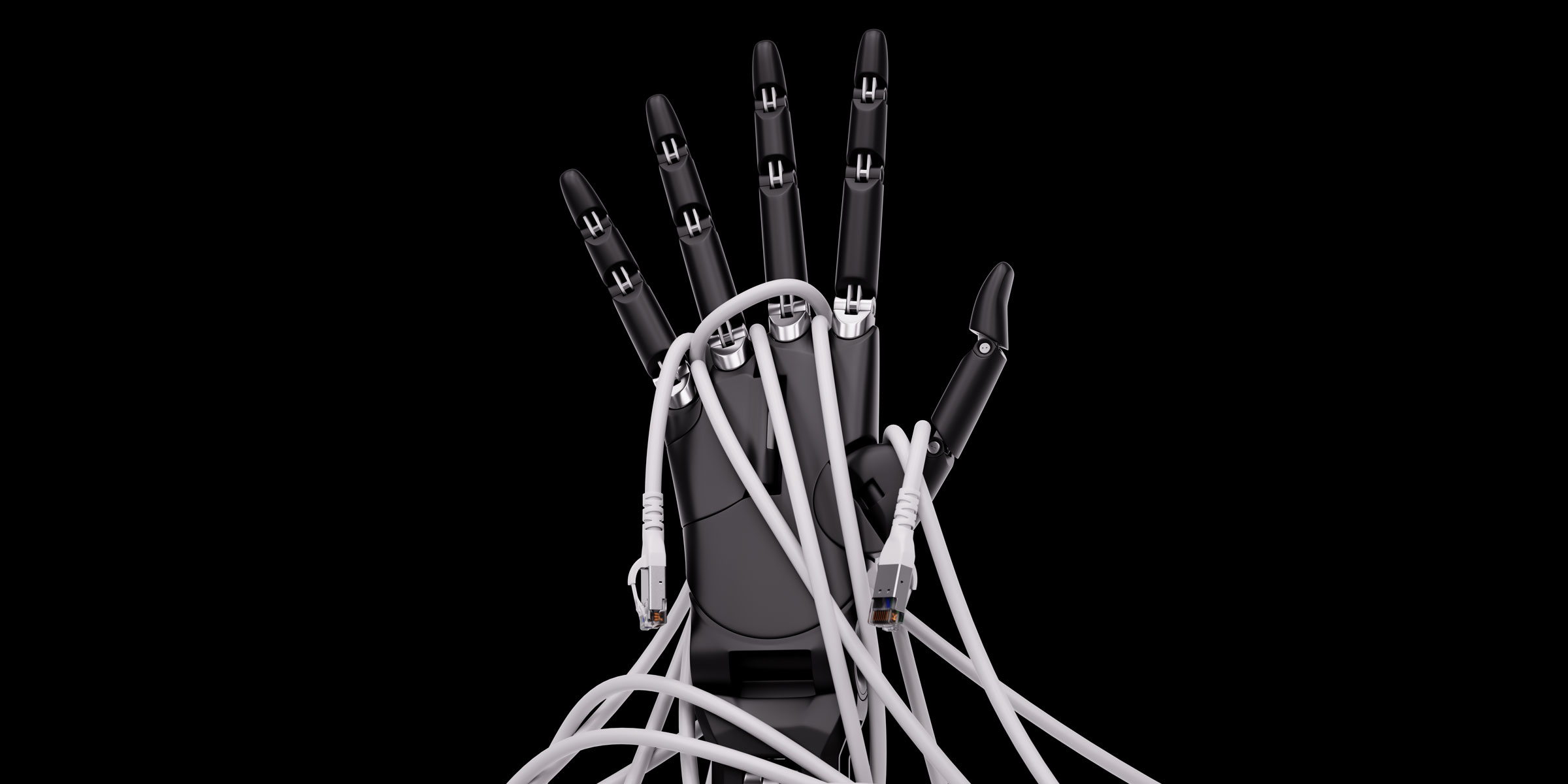

What has happened here has a special name – and of course we’re talking about DevOps. This is the portmanteau pairing of Dev (developers) and Ops (operations) in a new approach to workplace culture designed to enable these traditionally unwelcome bedfellows to live together better.

Developers want speed, functionality and choice of software tools. Operations wants security, compliance and control. The two worlds don’t always match. With DevOps, both teams can more productively work to an effective middle ground.

We need this context if we are to understand what is happening inside the new compute engines being created to serve AI and the machine learning (ML) functions that drive it. What comes next is MLOps.

Welcome to MLOps

Now a part of the way progressive cloud-native IT departments are looking to embrace automation and autonomous advantage, MLOps has a slightly unfortunate and possibly confusing name.

MLOps is not traditional Ops (i.e. database administration, sysadmin and so on) powered by ML. Instead, MLOps is the term we use to denote the operations and functions needed to make ML work properly.

So this means that MLOps is all about creating an ML operations pipeline to ensure we channel and manage the right data and connections into our machine brains. If we have good MLOps, then we have smart ML – and that paves the way to AI that is truly intelligent.

In terms of working practice, MLOps is all about feeding ML properly. This means it includes elements such as data ingestion, data wrangling, information management and data manipulation. Closely related to the extract, transform and load tasks that need to be executed to make an organisation’s data pipeline work effectively, MLOps also includes ML work related to feature selection, training, testing, deployment and monitoring.
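To make those stages concrete, here is a minimal sketch of a pipeline that runs ingestion, wrangling, feature selection, training and testing in order. Everything in it is an assumption made for illustration: the record fields (`units`, `price`, `demand`), the function names and the toy straight-line model are hypothetical and stand in for whatever data and model an organisation actually uses.

```python
from statistics import mean


def ingest():
    # Hypothetical raw records; None marks a missing reading.
    return [
        {"units": 10, "price": 2.0, "demand": 21.0},
        {"units": 20, "price": 2.0, "demand": 41.0},
        {"units": None, "price": 2.5, "demand": 30.0},
        {"units": 30, "price": 3.0, "demand": 61.0},
        {"units": 40, "price": 3.0, "demand": 81.0},
    ]


def wrangle(rows):
    # Data wrangling: drop any record with a missing value.
    return [r for r in rows if all(v is not None for v in r.values())]


def select_features(rows, feature="units", target="demand"):
    # Feature selection: keep one input column and the target.
    return [(r[feature], r[target]) for r in rows]


def train(pairs):
    # Training: ordinary least squares fit of y = slope * x + intercept.
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    mx, my = mean(xs), mean(ys)
    slope = sum((x - mx) * (y - my) for x, y in pairs) / sum(
        (x - mx) ** 2 for x in xs
    )
    return {"slope": slope, "intercept": my - slope * mx}


def evaluate(model, pairs):
    # Testing: mean absolute error on held-out records.
    return mean(
        abs(model["slope"] * x + model["intercept"] - y) for x, y in pairs
    )


def run_pipeline():
    pairs = select_features(wrangle(ingest()))
    train_pairs, test_pairs = pairs[:-1], pairs[-1:]  # hold out the last record
    model = train(train_pairs)
    return model, evaluate(model, test_pairs)


if __name__ == "__main__":
    fitted, error = run_pipeline()
    print(fitted, error)
```

Deployment and monitoring are left out of the sketch; in practice each function here would be a separately scheduled and logged step.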

Rather than attempt a short history of MLOps and data science, let’s look inside a couple of the finer points of MLOps mechanics.

To create great ML models, teams need to be able to track every part of the ML experimentation process as they look to automate definable, repeatable tasks. This means being able to log, share and version control all experiments as the ML pipeline starts to form and ultimately benefits from orchestration.
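As a rough illustration of what logging and versioning experiments can look like, the sketch below appends each run to a shared JSON Lines file and derives a run identifier from the run's parameters. It uses only the Python standard library; the function names, the file layout and the choice of a parameter hash as a version tag are assumptions made for this example, not the API of ClearML or any other MLOps product.

```python
import hashlib
import json
import time
from pathlib import Path


def log_experiment(log_path, params, metrics):
    # Version tag: a short hash of the sorted parameters, so the same
    # configuration always yields the same run_id.
    payload = json.dumps(params, sort_keys=True)
    run_id = hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]
    record = {
        "run_id": run_id,
        "logged_at": time.time(),
        "params": params,
        "metrics": metrics,
    }
    # Append-only log that a whole team could read from shared storage.
    with Path(log_path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return run_id


def load_experiments(log_path):
    # Read every logged run back as a list of dictionaries.
    with Path(log_path).open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


def best_run(log_path, metric, lower_is_better=True):
    # Pick the run with the best value for the named metric.
    pick = min if lower_is_better else max
    return pick(load_experiments(log_path), key=lambda r: r["metrics"][metric])
```

Calling `log_experiment("runs.jsonl", {"lr": 0.1}, {"mae": 3.1})` after each training run, and `best_run("runs.jsonl", "mae")` when comparing them, is enough to show the idea; a real platform would add artefact storage, access control and a user interface on top.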

“MLOps helps solve problems like that by monitoring model performance and managing model drift before problems occur,” according to Moses Guttmann, CEO and co-founder of ClearML, a company that styles itself as a frictionless, unified, end-to-end MLOps platform specialist. “ML engineers in charge of models in production can monitor them in real-time and see if or when they should be retrained on new data.”
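One simple way to picture the monitoring Guttmann describes is a sliding window over recent prediction errors that is compared against the error measured at training time. The sketch below is an assumption-laden illustration, not ClearML's method: the class name `DriftMonitor`, the window size and the tolerance factor are all hypothetical choices.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags a model for retraining when recent error outgrows the baseline."""

    def __init__(self, baseline_error, window=5, tolerance=1.5):
        self.baseline_error = baseline_error  # error measured at training time
        self.tolerance = tolerance            # allowed growth factor (assumed)
        self.errors = deque(maxlen=window)    # most recent absolute errors

    def observe(self, predicted, actual):
        # Record one production prediction once the true value is known.
        self.errors.append(abs(predicted - actual))
        return self.should_retrain()

    def should_retrain(self):
        # Wait for a full window so a single outlier cannot trigger retraining.
        if len(self.errors) < self.errors.maxlen:
            return False
        return mean(self.errors) > self.tolerance * self.baseline_error
```

With `DriftMonitor(baseline_error=2.0, window=3)`, the monitor stays quiet while the average of the last three errors is at or below 3.0 and signals retraining as soon as it rises above that.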

As we know, ML is powerful and thus it needs fuel to burn. Teams will need to think about cloud and system resource allocation and scheduling in order to control costs. As the ML experimentation process becomes more esoteric and the team’s ML models get more sophisticated, more use of unstructured data can be brought into play and new, previously untapped innovations may open up.

MLOps and ERP

So then, what more do we need to know about MLOps and what impact does it have on the ERP stream? In our world, ERP has only been truly AI-augmented for perhaps a decade, so is the machine intelligence we need getting the right operations-level consideration and support?

“Today we can see that ERP is an area that relies heavily on machine learning and when companies get their models right, they are more productive and profitable. But what we’ve seen in the past few years is models done wrong and gone wrong,” as Guttmann explains.

The CEO says that one recent resource planning example that played out at enterprise level was the abrupt and unexpected change brought about by COVID-19. As we know, this disruption greatly impaired companies’ ability to plan and to update their ML models quickly enough, contributing to the now well-known global supply chain issues.

“Given the scale and velocity of today’s global business, I believe MLOps is really the only way large companies will be able to stay ahead of issues, meet demand and to run efficiently.”

Although MLOps-rich AI for ERP could arguably be applied to any industry vertical, key growth markets are thought to include healthcare and healthtech, retailtech, advertising and marketing (adtech and martech), alongside manufacturing and others.

Fragmented point solutions

Anyone questioning the state of this sector of technology fabric and thinking, “Okay, but why all the fuss now?”, is justified in posing the question.

The answer is quite straightforward, and it helps clarify and validate where we are today with MLOps. The rise of ML in the modern era of AI (i.e. the real device deployments of today, not the fanciful AI dabbling that first happened in the 1980s) has parallels with the post-millennial age of cloud.

Too much happened in too many places, too fast, with too little integration and with too little consideration for scalability and interoperability.

This reality meant that ML developed in a scattershot fashion, leading to closed-off ‘point’ solutions (i.e. single purpose, narrow, overly proprietary etc.) and fragmented semi-platforms that failed to connect in an end-to-end fashion.

ClearML and others in this field often use this term end-to-end as some kind of marketing label or generalised affirmation of functionality, but here it really means something. In this context, end-to-end takes us from the initial point of data ingestion, through to processing and analytics inside intelligence engines and onwards to the user (or machine) endpoint of use.

“Every component of (our product) integrates seamlessly with each other, delivering cross-department visibility in research, development and production,” says Guttmann. “Many machine learning projects fail because of closed-off point tools that lead to an inability to collaborate and scale. Customers are forced to invest in multiple tools to accomplish their MLOps goals, creating a fragmented experience for data scientists and ML engineers – and ultimately for the end users, be they ERP professionals or others.”

The central issue emanating from this discussion – even if we get over the fragmented point solutions hurdle – is that the ML data science team does ML in one corner, while the ERP team does ERP in the other. It’s a scenario that’s frighteningly redolent of DevOps all over again.

The current proliferation of MLOps is of course meant to address not only the departmental disconnects that exist in any given enterprise, but also the information management processes that exist in this space.

There’s no point in trying to use smart ‘thinking’ technologies if you don’t think smart about how they operate. Organisations serious about using AI will now need to think about the provenance and process behind their ML pipelines – and this is precisely the pressure point at which MLOps is applied. Clever really, isn’t it?